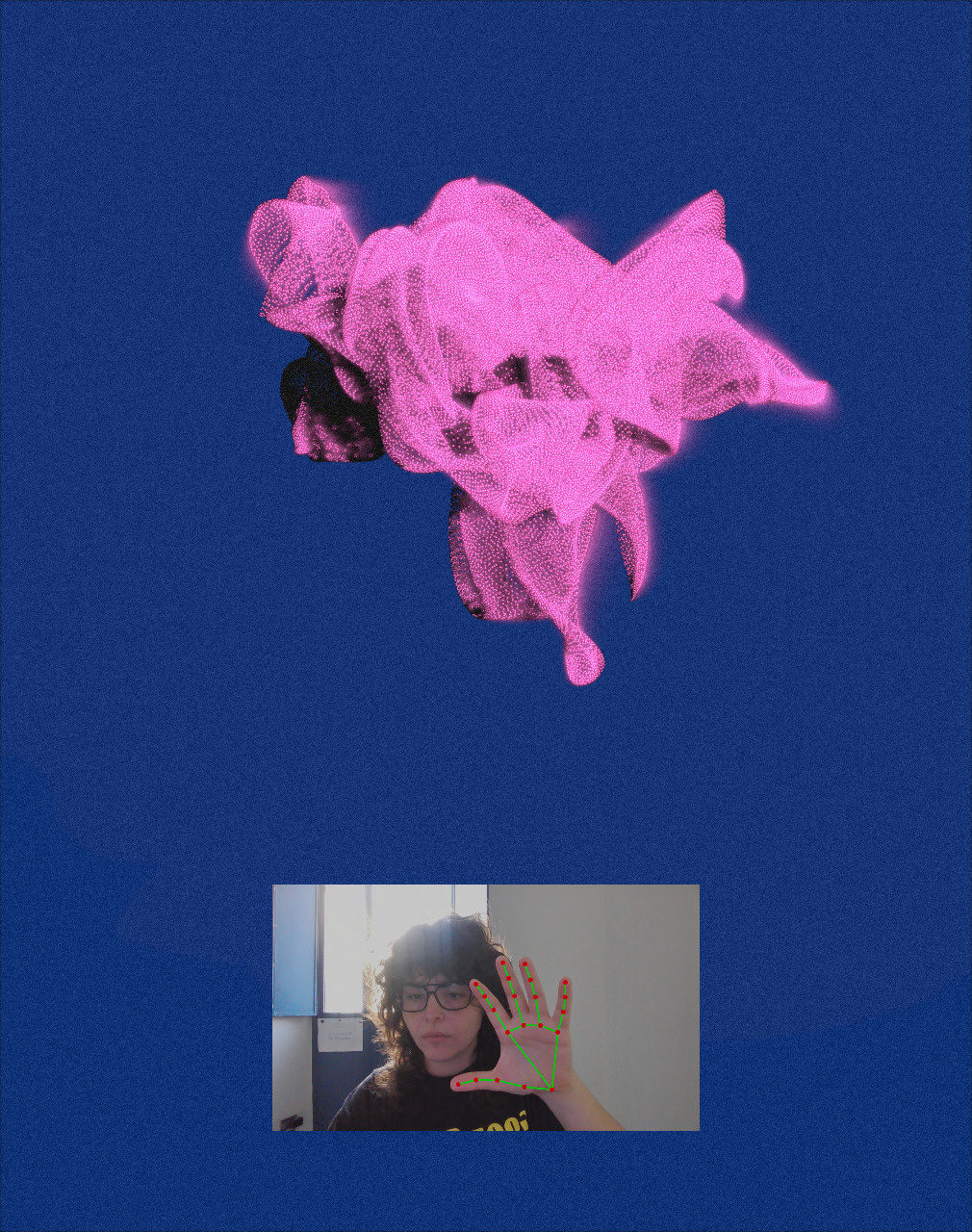

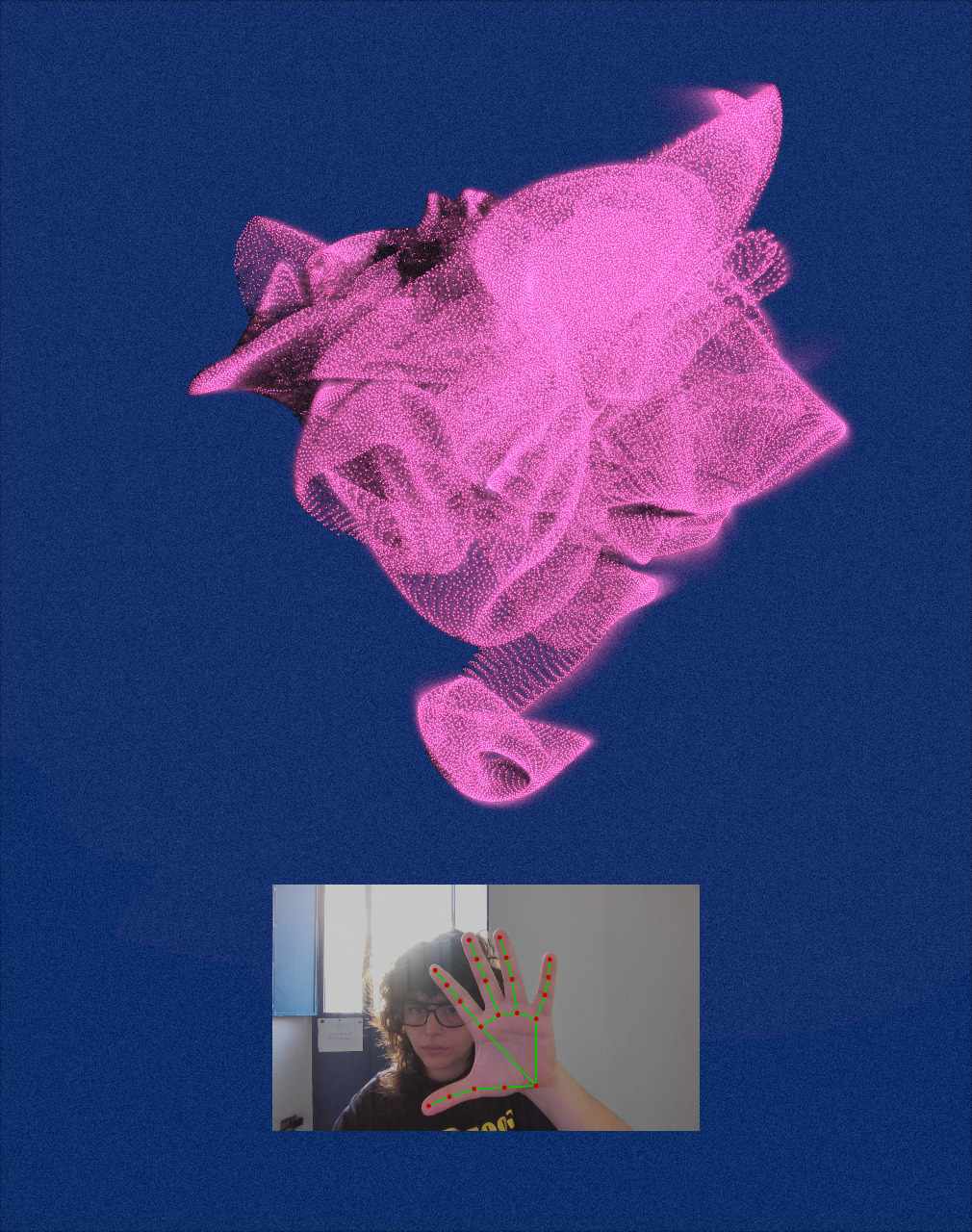

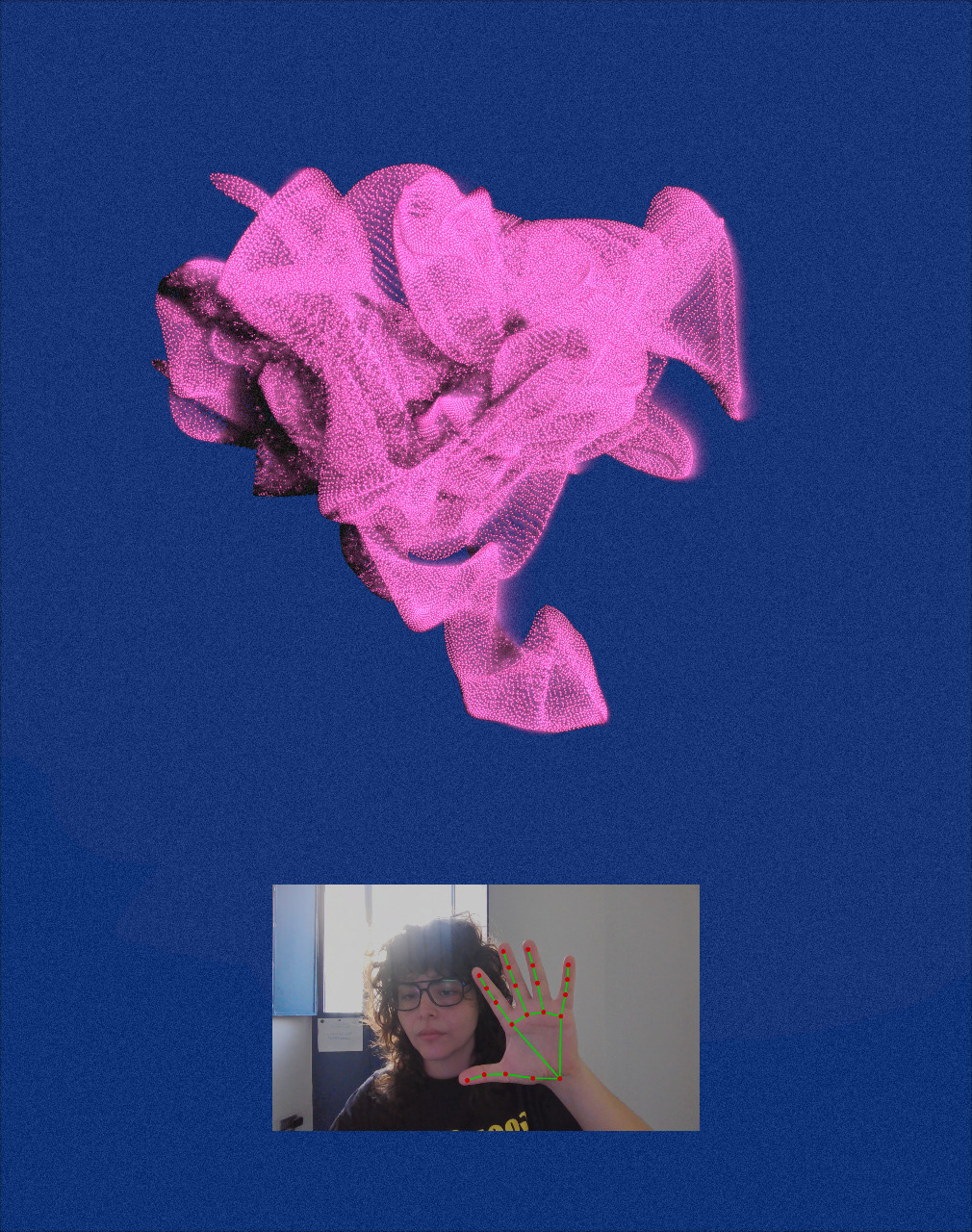

Controlling sound and point clouds through gestures using MediaPipe, a machine learning framework for real-time hand tracking and gesture recognition. In this project, MediaPipe captures hand movements, which are translated into interactions with a digital flower, changing its shape, colors, and sounds.

🔊 Sound on

This piece uses a digital flower that visually distorts as you get closer, symbolizing how human presence can alter the essence of the world that surrounds us.
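As a rough illustration of the "distorts as you get closer" idea, here is a minimal sketch in plain Python. It assumes the hand is described by MediaPipe-style landmarks (21 points with normalized coordinates, where index 0 is the wrist and index 9 is the base of the middle finger) and that the apparent size of the hand on screen can stand in for distance. The function names `hand_proximity` and `distort`, the thresholds, and the random jitter are all hypothetical choices for this example, not the method used in the piece.

```python
import math
import random

WRIST, MIDDLE_MCP = 0, 9  # landmark indices in MediaPipe's hand model


def hand_proximity(landmarks, far_span=0.1, near_span=0.5):
    """Estimate closeness in [0, 1] from how large the hand looks.

    `landmarks` is a list of (x, y) tuples in normalized image
    coordinates. `far_span` and `near_span` are assumed calibration
    values: the wrist-to-knuckle distance when the hand is far / near.
    """
    span = math.dist(landmarks[WRIST], landmarks[MIDDLE_MCP])
    t = (span - far_span) / (near_span - far_span)
    return max(0.0, min(1.0, t))  # clamp to [0, 1]


def distort(points, proximity, max_offset=0.3, seed=0):
    """Jitter each (x, y, z) point by up to max_offset * proximity."""
    rng = random.Random(seed)
    amount = max_offset * proximity
    return [
        (
            x + rng.uniform(-1, 1) * amount,
            y + rng.uniform(-1, 1) * amount,
            z + rng.uniform(-1, 1) * amount,
        )
        for x, y, z in points
    ]


if __name__ == "__main__":
    # Fake hand: every landmark at the wrist except the middle knuckle.
    hand = [(0.5, 0.9)] * 21
    hand[MIDDLE_MCP] = (0.5, 0.6)
    flower = [(0.0, 0.0, 0.0), (0.1, 0.2, 0.0), (-0.1, 0.2, 0.0)]
    print(distort(flower, hand_proximity(hand)))
```

With a proximity of 0 the points are returned unchanged, so the flower keeps its shape until a hand approaches.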

With MediaPipe, the flower not only changes shape: gestures also control its sounds and colors, creating a multi-sensory interaction.
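One way a gesture could drive both sound and color is sketched below, again in plain Python. It assumes MediaPipe-style hand landmarks (index 4 is the thumb tip, index 8 is the index fingertip) and uses the pinch distance between them as a single control value. The mapping to a pitch range and a hue, the `max_dist` threshold, and the function names are hypothetical and chosen only for illustration; the actual mapping in the piece may differ.

```python
import colorsys
import math

THUMB_TIP, INDEX_TIP = 4, 8  # landmark indices in MediaPipe's hand model


def pinch_amount(landmarks, max_dist=0.25):
    """Return 0.0 for a closed pinch and 1.0 for fully spread fingers.

    `landmarks` is a list of (x, y) tuples in normalized image
    coordinates. `max_dist` is an assumed calibration value.
    """
    d = math.dist(landmarks[THUMB_TIP], landmarks[INDEX_TIP])
    return max(0.0, min(1.0, d / max_dist))


def gesture_to_params(landmarks, low_hz=220.0, high_hz=880.0):
    """Map the pinch amount to an oscillator frequency and an RGB color."""
    t = pinch_amount(landmarks)
    # Exponential interpolation so equal hand movements feel like
    # equal musical intervals.
    frequency = low_hz * (high_hz / low_hz) ** t
    # Sweep the hue from red (0.0) toward violet (0.8).
    rgb = colorsys.hsv_to_rgb(0.8 * t, 1.0, 1.0)
    return {"frequency_hz": frequency, "rgb": rgb}


if __name__ == "__main__":
    hand = [(0.5, 0.5)] * 21
    hand[INDEX_TIP] = (0.6, 0.5)  # fingers slightly apart
    print(gesture_to_params(hand))
```

In a live setup, the returned values would be sent to whatever synthesizer and renderer the installation uses, typically once per camera frame.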

my Tech role >

Interaction and computer vision development

Calibrated and configured the MediaPipe hand-tracking model to enable interactive hand gesture recognition, transforming a point cloud in real time.

my Art role >

Generative and interactive video content creation.

Interaction design.
